Super-resolution reconstruction of retinal OCT image using multi-teacher knowledge distillation network


Abstract: Optical coherence tomography (OCT) is widely used in ophthalmic diagnosis and adjuvant therapy, but its image quality is inevitably degraded by speckle noise and motion artifacts. This paper proposes MK-OCT, a multi-teacher knowledge distillation network for OCT super-resolution, in which teacher networks with different strengths train a balanced, lightweight, and efficient student network. The efficient channel distillation (ECD) method used in MK-OCT also helps the model better preserve the texture information of retinal images, which meets clinical needs. Experimental results show that, compared with classical super-resolution networks, the proposed model performs well in both reconstruction accuracy and perceptual quality while having a smaller model size and a lower computational cost.


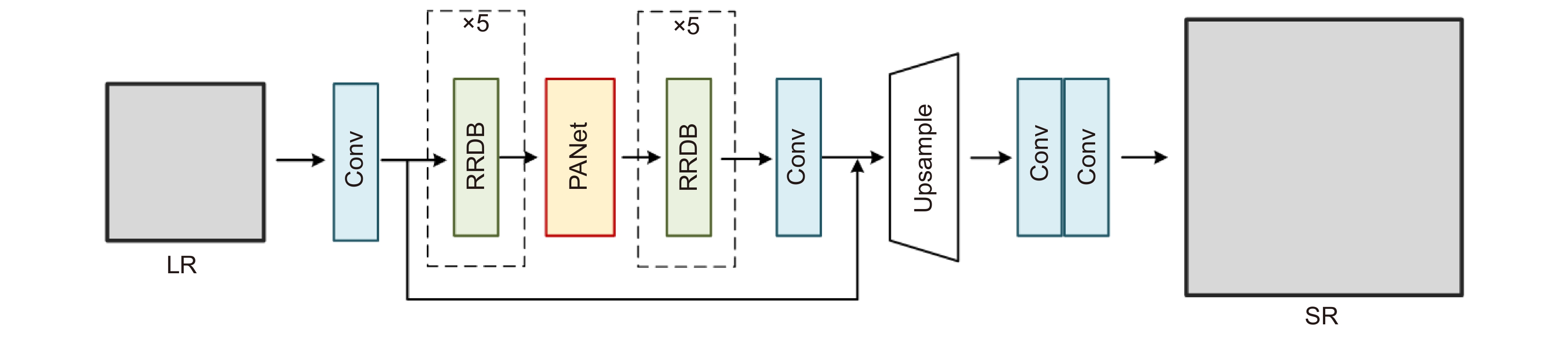

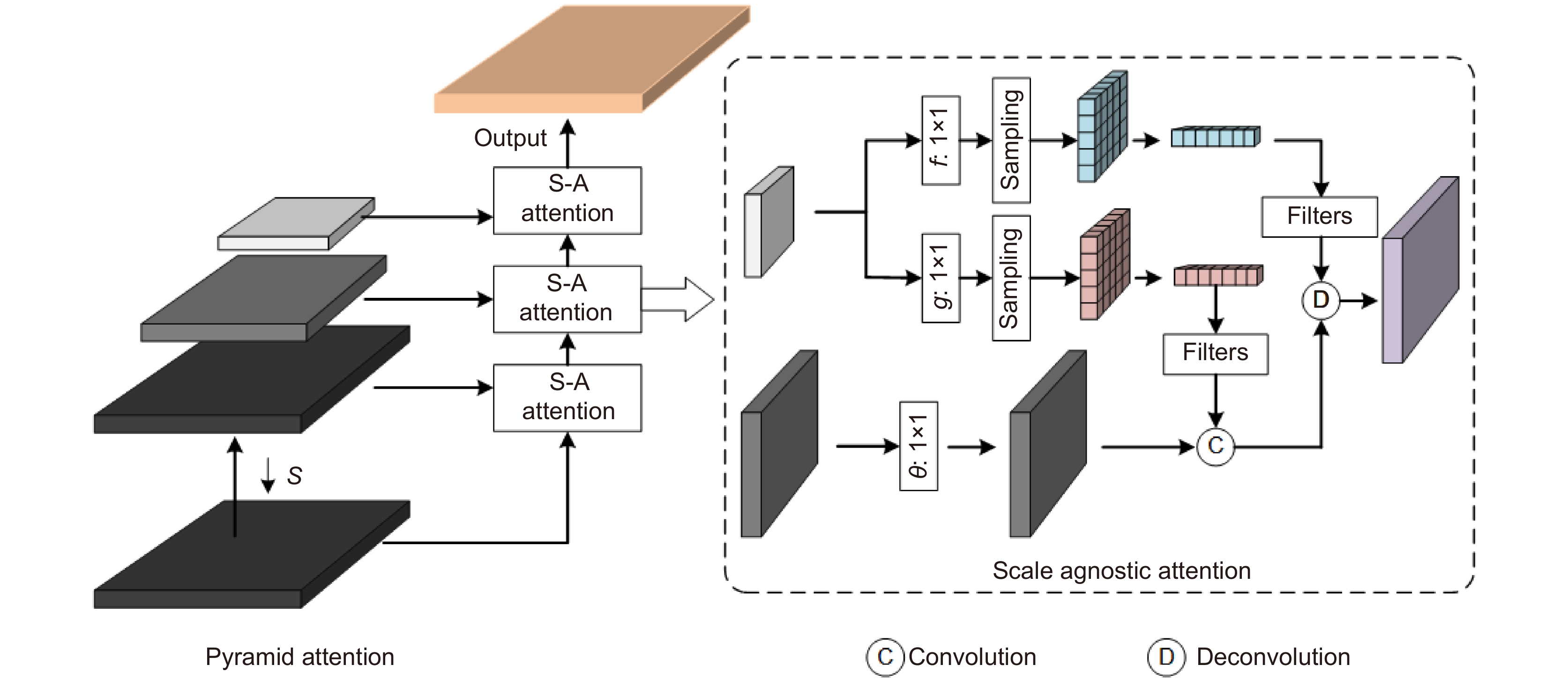

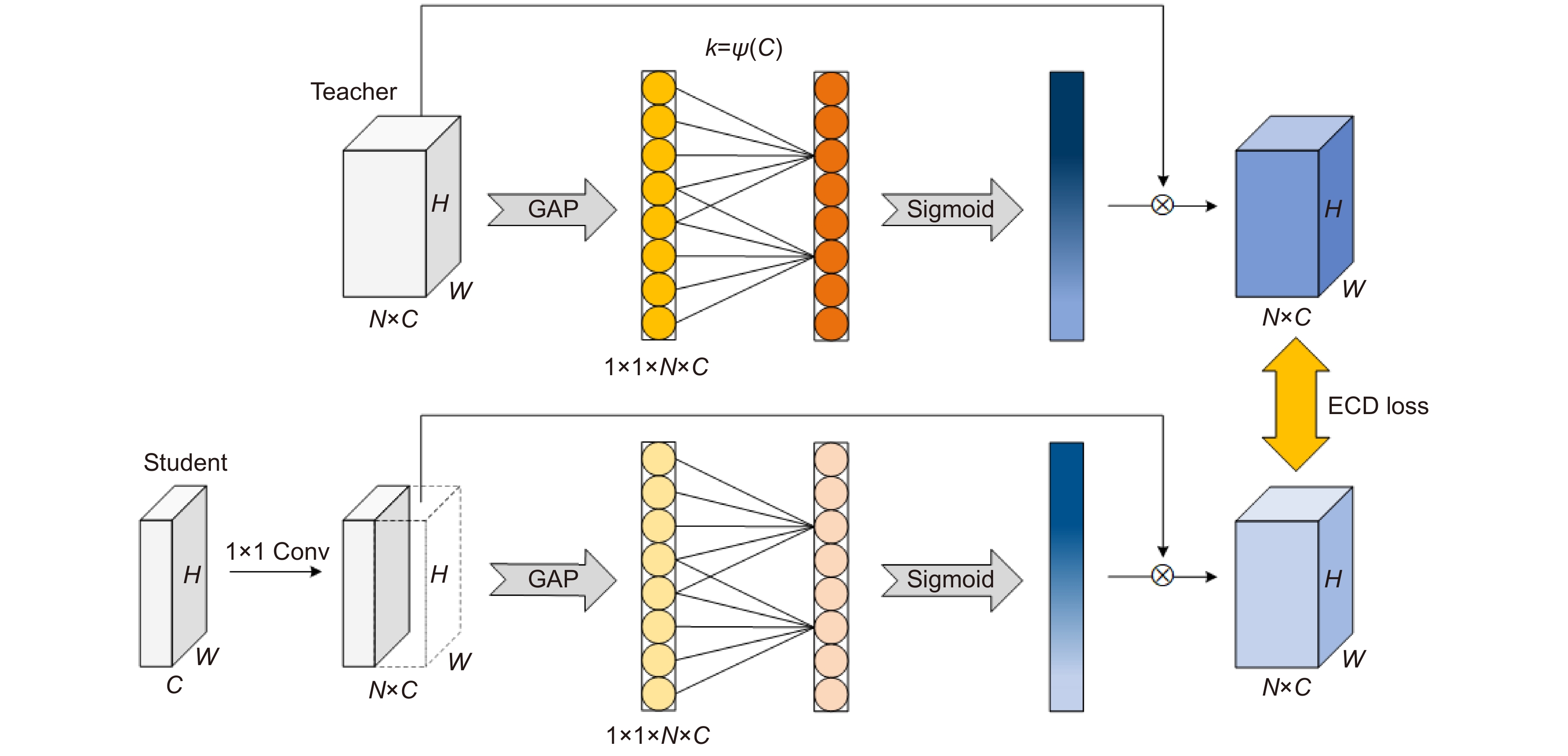

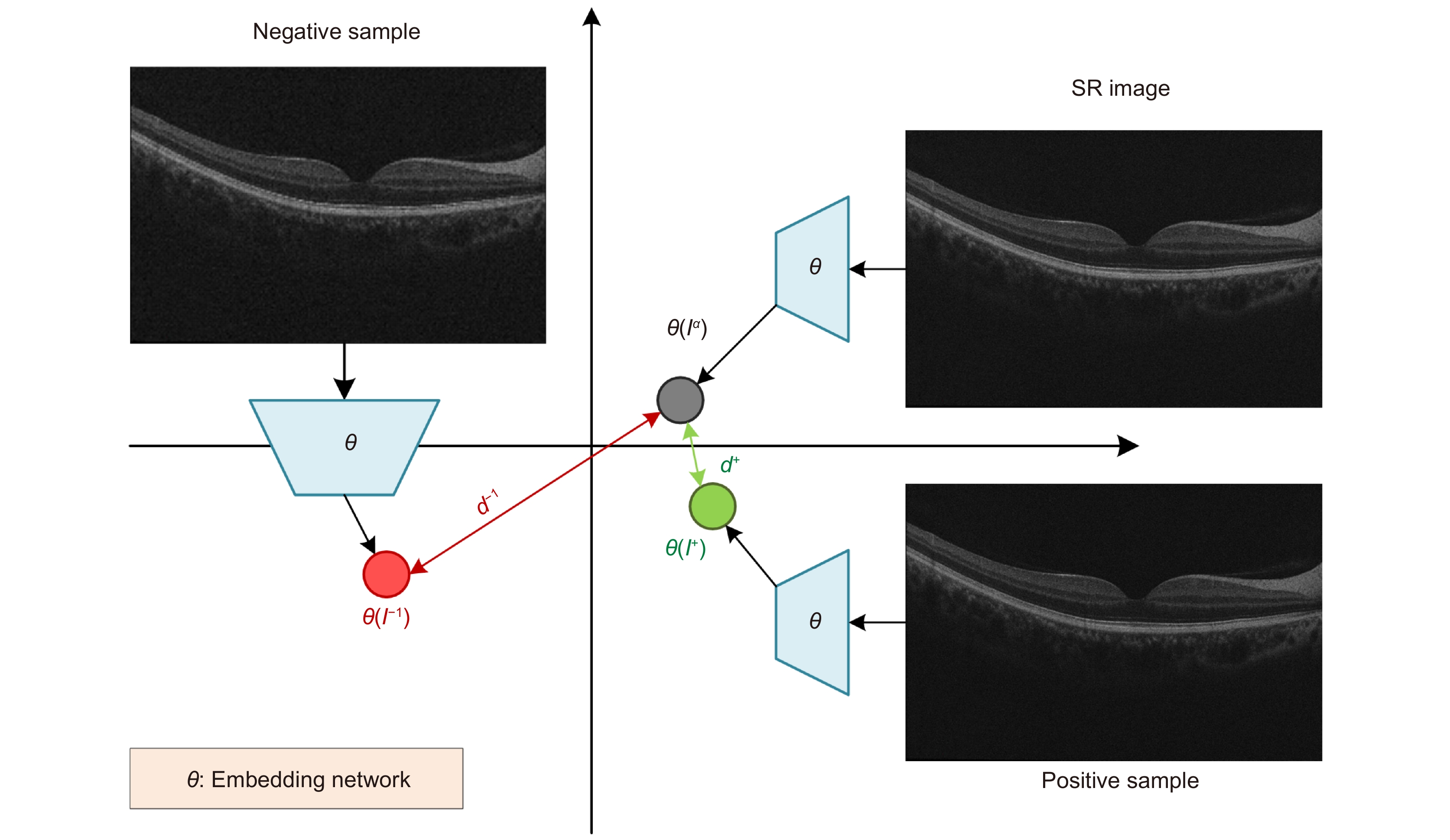

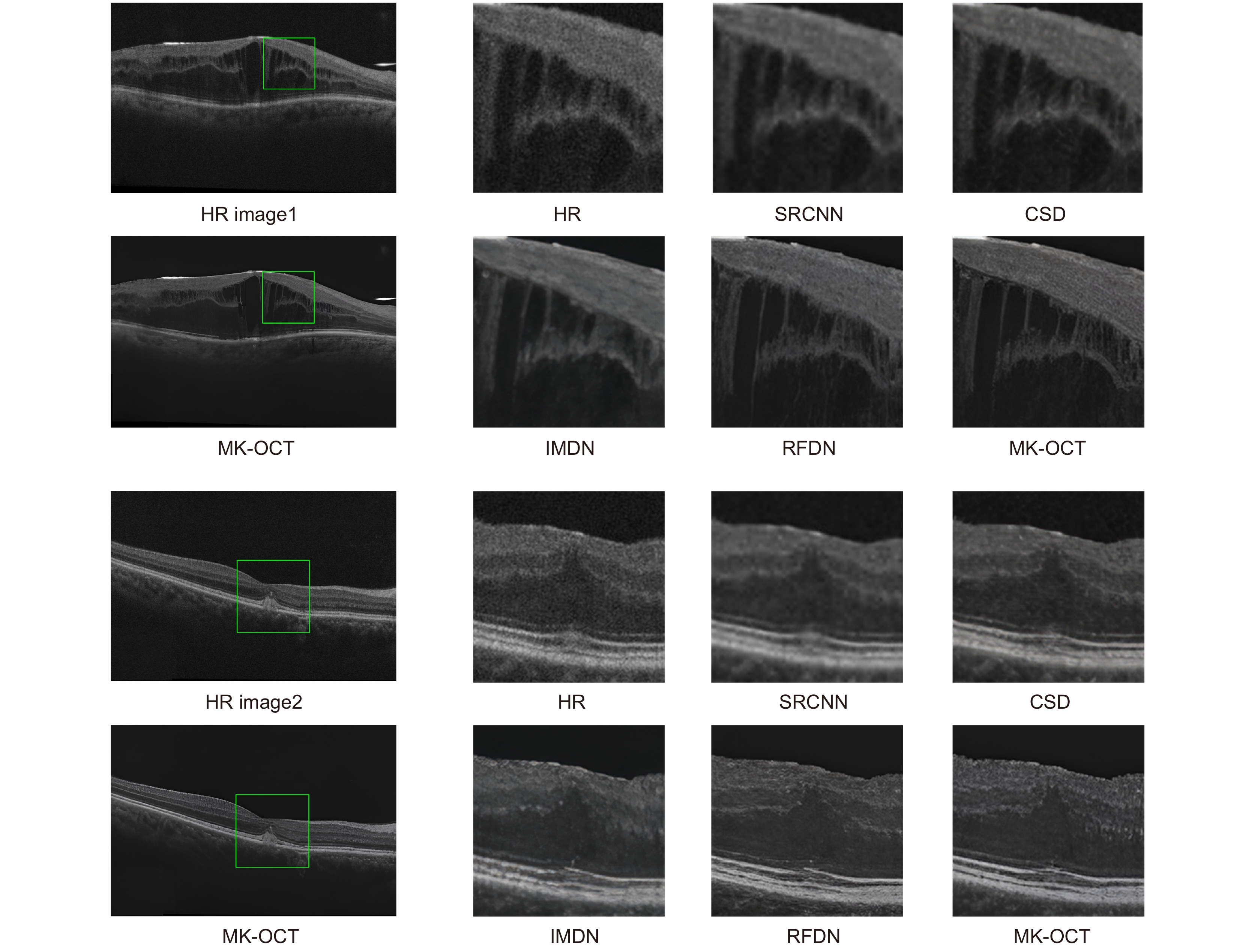

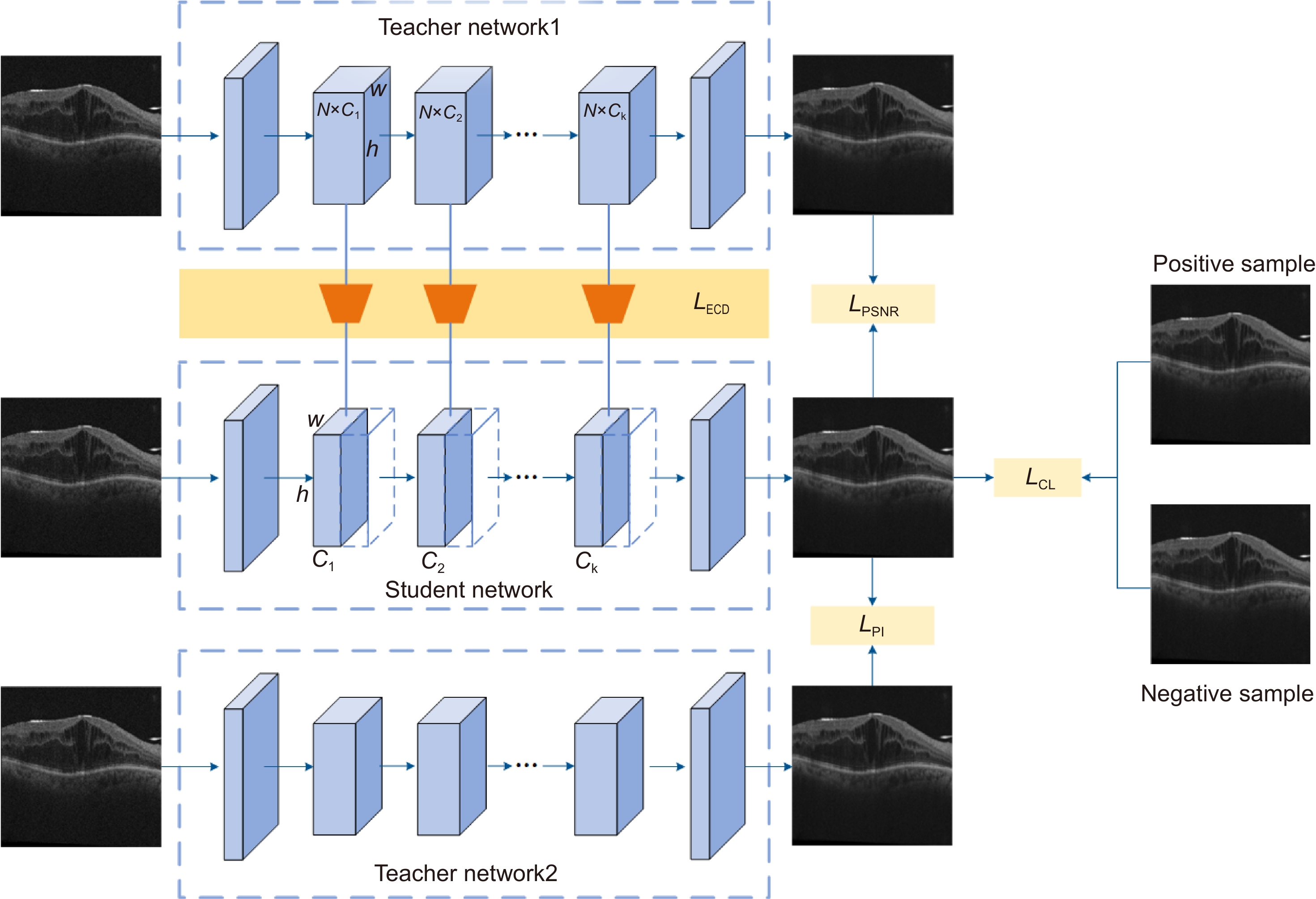

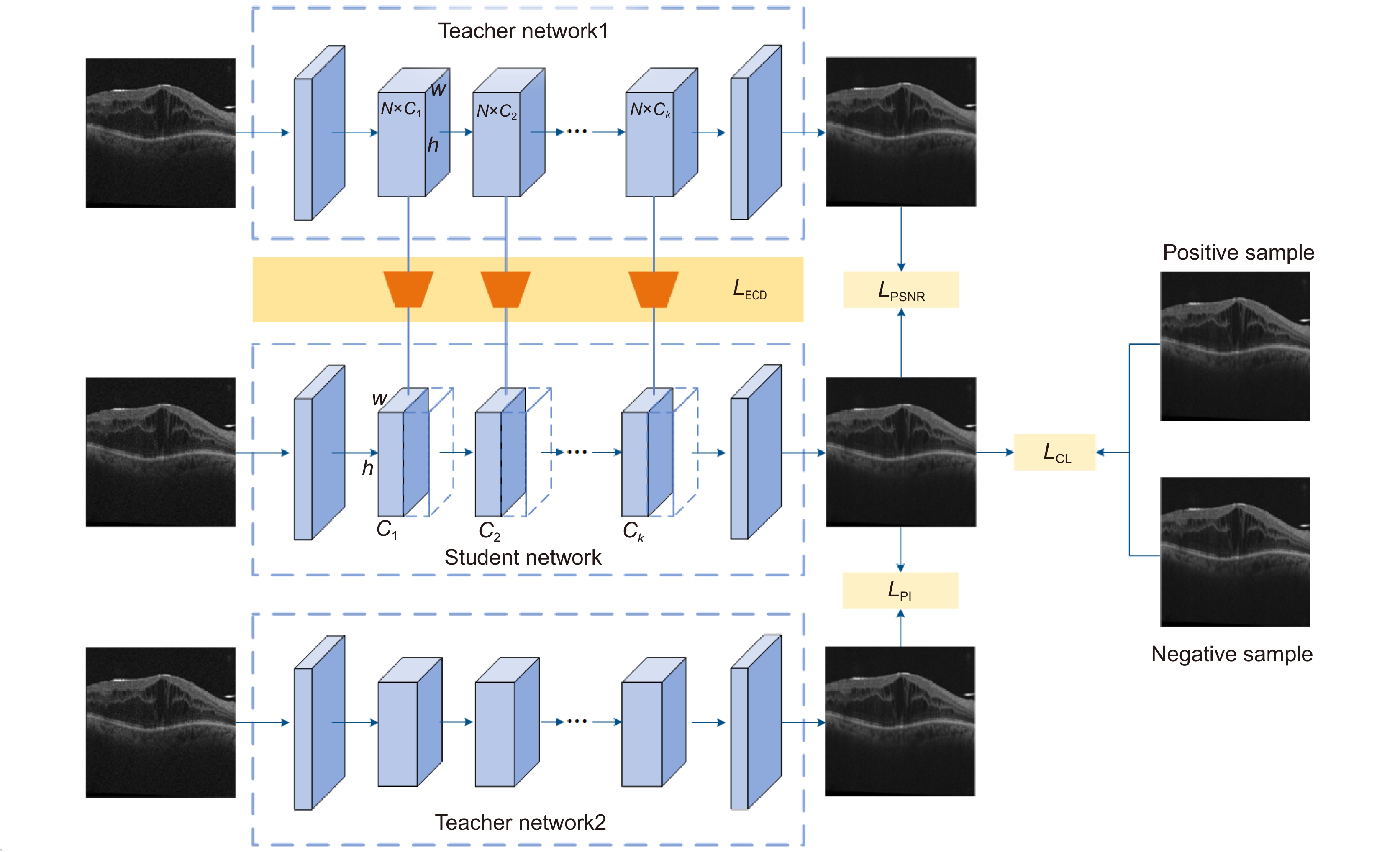

Overview: Optical coherence tomography (OCT), which is widely used in the diagnosis of ophthalmic diseases, reconstructs three-dimensional cross-sectional images of the interior of biological tissue through the interference of low-coherence light. However, because low-coherence light is inevitably scattered when it enters tissue, OCT retinal images contain speckle noise that obscures subtle but clinically important details. In addition, involuntary motion during image acquisition, such as eye movements (drift, tremor, and microsaccades), head movements, and cardiopulmonary activity, introduces artifacts into OCT images, which affects clinical diagnosis and interferes with subsequent automated image analysis.

Existing OCT super-resolution networks tend to focus solely on either reconstruction accuracy or perceptual quality. To address this, reduce model complexity, and make the network more suitable for clinical use, this paper proposes MK-OCT, a multi-teacher knowledge distillation network for OCT image super-resolution. Through knowledge distillation, the student network combines the different strengths of the teacher networks and becomes balanced, lightweight, and efficient. An efficient channel distillation (ECD) method is also proposed: it lets the student network extract rich channel attention information from the middle layers of the teacher network and transfers that information to the middle layers of the student network in the form of a loss function, which improves performance without increasing the parameters or computational cost of the student network. During training, the student network and the two teacher networks all take low-resolution images as input. After the three networks each produce a reconstructed image, different loss functions measure the loss between the output images of the networks, so that the student network learns reconstruction accuracy and perceptual quality from the two teacher networks simultaneously. The student network additionally uses contrastive learning, which supplies external knowledge in the form of upper and lower bounds and narrows the optimization space of the OCT super-resolution task, further improving the performance of the student network.

We compared MK-OCT with four classic lightweight super-resolution models, namely SRCNN, CSD, IMDN, and RFDN, and the experiments verified its effectiveness and superiority for OCT image super-resolution reconstruction. Ablation experiments further confirmed the effectiveness of multi-teacher knowledge distillation, and a generalization experiment showed that MK-OCT generalizes well.
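The training objective described in the overview can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the overview does not specify the loss functions, so the L1 distances, the pixel-space contrastive ratio, the fixed averaging kernel in `channel_attention`, the equal channel counts of student and teacher features, and the weights `w` are all assumptions chosen for illustration, and every function name is hypothetical.

```python
import numpy as np


def channel_attention(feat, k=3):
    """ECA-style channel attention for a feature map of shape (C, H, W).

    Assumption: a fixed averaging kernel stands in for the learned 1D
    convolution weights that a trained attention module would have.
    """
    pooled = feat.mean(axis=(1, 2))               # global average pooling -> (C,)
    padded = np.pad(pooled, k // 2, mode="edge")  # keep length C after the conv
    mixed = np.convolve(padded, np.ones(k) / k, mode="valid")
    return 1.0 / (1.0 + np.exp(-mixed))           # sigmoid -> weights in (0, 1)


def ecd_loss(student_feat, teacher_feat):
    """Mean squared gap between student and teacher channel attention.

    Assumption: both middle-layer feature maps have the same channel count.
    """
    diff = channel_attention(student_feat) - channel_attention(teacher_feat)
    return float(np.mean(diff ** 2))


def l1(a, b):
    """Mean absolute difference between two arrays."""
    return float(np.mean(np.abs(a - b)))


def contrastive_term(sr, positive, negative, eps=1e-8):
    """Small when sr is close to the positive and far from the negative."""
    return l1(sr, positive) / (l1(sr, negative) + eps)


def student_loss(sr, hr, sr_t_psnr, sr_t_pi, bicubic, s_feat, t_feat,
                 w=(1.0, 1.0, 1.0, 1.0, 0.1)):
    """Weighted sum of the illustrative terms (weights are placeholders)."""
    terms = (
        l1(sr, hr),                         # supervision from the ground truth
        l1(sr, sr_t_psnr),                  # accuracy-oriented teacher output
        l1(sr, sr_t_pi),                    # perception-oriented teacher output
        ecd_loss(s_feat, t_feat),           # channel attention distillation
        contrastive_term(sr, hr, bicubic),  # upper bound: hr, lower bound: bicubic
    )
    return sum(wi * ti for wi, ti in zip(w, terms))
```

The distillation terms act only on the training loss, which is consistent with the statement that ECD adds no parameters or computation to the student network at inference time.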


Table 1. Average performance of various super-resolution models after ×4 reconstruction

| Method | Size (MB) | FLOPs (G) | Dataset 1 PSNR | Dataset 1 SSIM | Dataset 1 LPIPS | Dataset 1 PI | Dataset 2 PSNR | Dataset 2 SSIM | Dataset 2 LPIPS | Dataset 2 PI |
|---|---|---|---|---|---|---|---|---|---|---|
| Bicubic | - | - | 28.12 | 0.7811 | 0.412 | 6.795 | 28.43 | 0.7730 | 0.422 | 6.579 |
| SRCNN | 0.2 | 0.23 | 28.59 | 0.8003 | 0.404 | 6.355 | 28.79 | 0.7986 | 0.398 | 6.297 |
| CSD | 12.16 | 122.1 | 30.95 | 0.8142 | 0.310 | 5.677 | 30.90 | 0.8119 | 0.327 | 5.802 |
| IMDN | 2.65 | 41.9 | 31.24 | 0.8217 | 0.226 | 5.553 | 31.21 | 0.8220 | 0.230 | 5.608 |
| RFDN | 1.59 | 32.0 | 31.67 | 0.8262 | 0.220 | 5.217 | 31.78 | 0.8217 | 0.217 | 5.139 |
| MK-OCT (Ours) | 1.41 | 29.8 | 32.93 | 0.8460 | 0.149 | 4.521 | 32.90 | 0.8443 | 0.143 | 4.443 |

Table 2. Quantitative evaluation of student networks under different conditions after ×4 reconstruction

| Dataset | Metric | HR-SMK | Single-teacher (TPSNR) | Single-teacher (TPI) | None-CL |
|---|---|---|---|---|---|
| Dataset 1 | PSNR | 31.27 | 32.88 | 32.77 | 32.88 |
| Dataset 1 | SSIM | 0.8238 | 0.8459 | 0.8396 | 0.8457 |
| Dataset 1 | LPIPS | 0.230 | 0.217 | 0.142 | 0.150 |
| Dataset 1 | PI | 5.593 | 5.440 | 4.457 | 4.608 |
| Dataset 2 | PSNR | 31.33 | 32.87 | 32.81 | 32.86 |
| Dataset 2 | SSIM | 0.8178 | 0.8424 | 0.8411 | 0.8420 |
| Dataset 2 | LPIPS | 0.214 | 0.209 | 0.140 | 0.148 |
| Dataset 2 | PI | 5.146 | 5.129 | 4.561 | 4.670 |

Table 3. Average PSNR and PI values of various super-resolution models after reconstruction on the new dataset

| Method | PSNR (×2) | PSNR (×4) | PI (×2) | PI (×4) |
|---|---|---|---|---|
| SRCNN | 33.67 | 28.79 | 4.667 | 6.033 |
| CSD | 34.22 | 29.98 | 4.109 | 5.820 |
| IMDN | 35.90 | 31.06 | 4.233 | 5.709 |
| RFDN | 35.89 | 31.77 | 4.059 | 5.455 |
| MK-OCT (Ours) | 36.20 | 32.58 | 3.979 | 5.103 |
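For readers unfamiliar with the PSNR metric reported in the tables, the following is a minimal sketch of the standard definition. The function name `psnr` is hypothetical, and the default peak value of 255 assumes 8-bit images; the page does not state the bit depth or the evaluation code actually used.

```python
import numpy as np


def psnr(reference, reconstructed, peak=255.0):
    """Peak signal-to-noise ratio in dB: 10 * log10(peak^2 / MSE).

    Assumption: `peak` is the maximum possible pixel value (255 for 8-bit).
    """
    ref = np.asarray(reference, dtype=np.float64)
    rec = np.asarray(reconstructed, dtype=np.float64)
    mse = np.mean((ref - rec) ** 2)  # mean squared error over all pixels
    if mse == 0:
        return float("inf")          # identical images have unbounded PSNR
    return float(10.0 * np.log10(peak ** 2 / mse))
```

Higher PSNR indicates a reconstruction closer to the reference, whereas for LPIPS and PI lower values are better.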

